Update layer1-port-openscienceframework and same for zenodo

parent 9fa2c2faab

commit 0dca8fc4f0

458 changed files with 0 additions and 35168 deletions

|

|

@ -1,23 +0,0 @@

|

|||

# Patterns to ignore when building packages.

|

||||

# This supports shell glob matching, relative path matching, and

|

||||

# negation (prefixed with !). Only one pattern per line.

|

||||

.DS_Store

|

||||

# Common VCS dirs

|

||||

.git/

|

||||

.gitignore

|

||||

.bzr/

|

||||

.bzrignore

|

||||

.hg/

|

||||

.hgignore

|

||||

.svn/

|

||||

# Common backup files

|

||||

*.swp

|

||||

*.bak

|

||||

*.tmp

|

||||

*.orig

|

||||

*~

|

||||

# Various IDEs

|

||||

.project

|

||||

.idea/

|

||||

*.tmproj

|

||||

.vscode/

|

||||

|

|

@ -1,8 +0,0 @@

|

|||

apiVersion: v2

|

||||

name: common

|

||||

description: A Helm chart for Kubernetes

|

||||

type: library

|

||||

version: 0.1.2

|

||||

appVersion: "1.16.0"

|

||||

sources:

|

||||

- https://github.com/Sciebo-RDS/Sciebo-RDS

|

||||

|

|

@ -1,65 +0,0 @@

|

|||

|

||||

{{/*

|

||||

Return the proper image name

|

||||

{{ include "common.image" ( dict "imageRoot" .Values.path.to.the.image "global" $) }}

|

||||

*/}}

|

||||

{{- define "common.image" -}}

|

||||

{{- $registryName := .imageRoot.registry -}}

|

||||

{{- $repositoryName := .imageRoot.repository -}}

|

||||

{{- if .repository -}}

|

||||

{{- $repositoryName = .repository -}}

|

||||

{{- end -}}

|

||||

{{- $tag := .imageRoot.tag | toString -}}

|

||||

{{- if .global }}

|

||||

{{- if .global.image }}

|

||||

{{- if .global.image.registry }}

|

||||

{{- $registryName = .global.image.registry -}}

|

||||

{{- end -}}

|

||||

{{- if .global.image.tag -}}

|

||||

{{- $tag = .global.image.tag | toString -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- if $registryName }}

|

||||

{{- printf "%s/%s:%s" $registryName $repositoryName $tag -}}

|

||||

{{- else -}}

|

||||

{{- printf "%s:%s" $repositoryName $tag -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

|

||||

{{- define "common.ingressAnnotations" -}}

|

||||

{{- $annotations := dict -}}

|

||||

{{- with .Values.ingress.annotations }}

|

||||

{{- $annotations = . -}}

|

||||

{{- end -}}

|

||||

{{- if .Values.global }}

|

||||

{{- if .Values.global.ingress }}

|

||||

{{- if .Values.global.ingress.annotations }}

|

||||

{{- $annotations = mustMergeOverwrite .Values.global.ingress.annotations $annotations -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- toYaml $annotations -}}

|

||||

{{- end -}}

|

||||

|

||||

|

||||

{{- define "common.tlsSecretName" -}}

|

||||

{{- $secretName := "" -}}

|

||||

{{- if .Values.ingress }}

|

||||

{{- if .Values.ingress.tls }}

|

||||

{{- if .Values.ingress.tls.secretName }}

|

||||

{{- $secretName = .Values.ingress.tls.secretName -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- if .Values.global }}

|

||||

{{- if .Values.global.ingress }}

|

||||

{{- if .Values.global.ingress.tls }}

|

||||

{{- if .Values.global.ingress.tls.secretName }}

|

||||

{{- $secretName = .Values.global.ingress.tls.secretName -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- printf "%s" $secretName -}}

|

||||

{{- end -}}

|

||||

|

|

@ -1,21 +0,0 @@

|

|||

# Patterns to ignore when building packages.

|

||||

# This supports shell glob matching, relative path matching, and

|

||||

# negation (prefixed with !). Only one pattern per line.

|

||||

.DS_Store

|

||||

# Common VCS dirs

|

||||

.git/

|

||||

.gitignore

|

||||

.bzr/

|

||||

.bzrignore

|

||||

.hg/

|

||||

.hgignore

|

||||

.svn/

|

||||

# Common backup files

|

||||

*.swp

|

||||

*.bak

|

||||

*.tmp

|

||||

*~

|

||||

# Various IDEs

|

||||

.project

|

||||

.idea/

|

||||

*.tmproj

|

||||

|

|

@ -1,23 +0,0 @@

|

|||

apiVersion: v1

|

||||

appVersion: 1.18.0

|

||||

description: A Jaeger Helm chart for Kubernetes

|

||||

home: https://jaegertracing.io

|

||||

icon: https://camo.githubusercontent.com/afa87494e0753b4b1f5719a2f35aa5263859dffb/687474703a2f2f6a61656765722e72656164746865646f63732e696f2f656e2f6c61746573742f696d616765732f6a61656765722d766563746f722e737667

|

||||

keywords:

|

||||

- jaeger

|

||||

- opentracing

|

||||

- tracing

|

||||

- instrumentation

|

||||

maintainers:

|

||||

- email: david.vonthenen@dell.com

|

||||

name: dvonthenen

|

||||

- email: michael.lorant@fairfaxmedia.com.au

|

||||

name: mikelorant

|

||||

- email: naseem@transit.app

|

||||

name: naseemkullah

|

||||

- email: pavel.nikolov@fairfaxmedia.com.au

|

||||

name: pavelnikolov

|

||||

name: jaeger

|

||||

sources:

|

||||

- https://hub.docker.com/u/jaegertracing/

|

||||

version: 0.34.1

|

||||

|

|

@ -1,10 +0,0 @@

|

|||

approvers:

|

||||

- dvonthenen

|

||||

- mikelorant

|

||||

- naseemkullah

|

||||

- pavelnikolov

|

||||

reviewers:

|

||||

- dvonthenen

|

||||

- mikelorant

|

||||

- naseemkullah

|

||||

- pavelnikolov

|

||||

|

|

@ -1,380 +0,0 @@

|

|||

# Jaeger

|

||||

|

||||

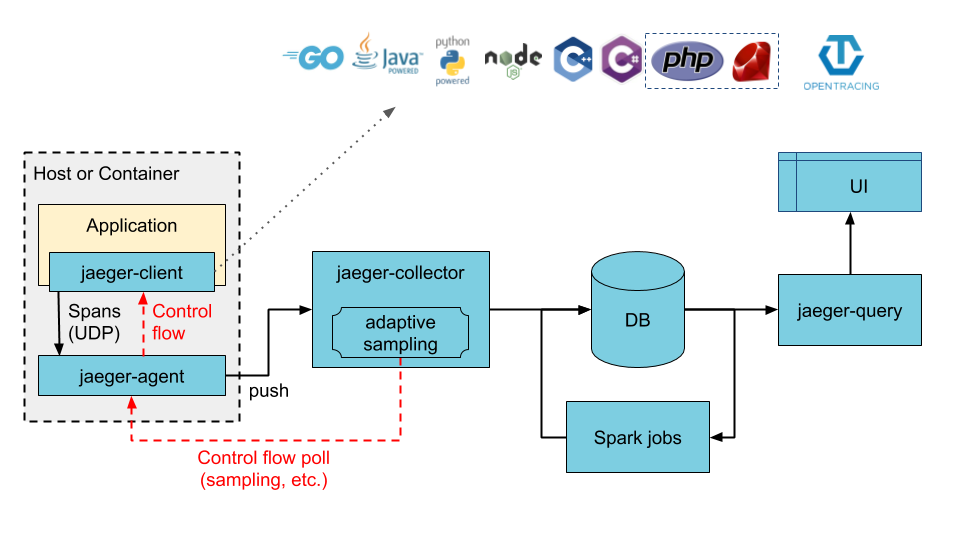

[Jaeger](https://www.jaegertracing.io/) is a distributed tracing system.

|

||||

|

||||

## Introduction

|

||||

|

||||

This chart adds all components required to run Jaeger as described on the [jaeger-kubernetes](https://github.com/jaegertracing/jaeger-kubernetes) GitHub page for a production-like deployment. By default, the chart deploys a new Cassandra cluster (using the [cassandra chart](https://github.com/kubernetes/charts/tree/master/incubator/cassandra)). It also supports using an existing Cassandra cluster, deploying a new ElasticSearch cluster (using the [elasticsearch chart](https://github.com/elastic/helm-charts/tree/master/elasticsearch)), or connecting to an existing ElasticSearch cluster. Once the storage backend is available, the chart deploys jaeger-agent as a DaemonSet and the jaeger-collector and jaeger-query components as Deployments.

|

||||

|

||||

## Installing the Chart

|

||||

|

||||

Add the Jaeger Tracing Helm repository:

|

||||

|

||||

```bash

|

||||

helm repo add jaegertracing https://jaegertracing.github.io/helm-charts

|

||||

```

|

||||

|

||||

To install the chart with the release name `jaeger`, run the following command:

|

||||

|

||||

```bash

|

||||

helm install jaeger jaegertracing/jaeger

|

||||

```

|

||||

|

||||

By default, the chart deploys the following:

|

||||

|

||||

- Jaeger Agent DaemonSet

|

||||

- Jaeger Collector Deployment

|

||||

- Jaeger Query (UI) Deployment

|

||||

- Cassandra StatefulSet

|

||||

|

||||

|

||||

|

||||

IMPORTANT NOTE: For testing purposes, the footprint for Cassandra can be reduced significantly in the event resources become constrained (such as running on your local laptop or in a Vagrant environment). You can override the required resources by running this command:

|

||||

|

||||

```bash

|

||||

helm install jaeger jaegertracing/jaeger \

|

||||

--set cassandra.config.max_heap_size=1024M \

|

||||

--set cassandra.config.heap_new_size=256M \

|

||||

--set cassandra.resources.requests.memory=2048Mi \

|

||||

--set cassandra.resources.requests.cpu=0.4 \

|

||||

--set cassandra.resources.limits.memory=2048Mi \

|

||||

--set cassandra.resources.limits.cpu=0.4

|

||||

```
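The same overrides can also be kept in a values file instead of a list of `--set` flags. The fragment below is a sketch that mirrors the flags above key for key; the file name `cassandra-small.yaml` is only an illustrative assumption.

```yaml
# cassandra-small.yaml (hypothetical file name)
# Mirrors the --set flags shown above; the values are the same reduced footprint.
cassandra:
  config:
    max_heap_size: 1024M
    heap_new_size: 256M
  resources:
    requests:
      memory: 2048Mi
      cpu: 0.4
    limits:
      memory: 2048Mi
      cpu: 0.4
```

It would then be passed with `helm install jaeger jaegertracing/jaeger --values cassandra-small.yaml`.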

|

||||

|

||||

## Installing the Chart using an Existing Cassandra Cluster

|

||||

|

||||

If you already have a running Cassandra cluster, you can configure the chart as follows to use it as your backing store (make sure you replace `<HOST>`, `<PORT>`, etc. with your values):

|

||||

|

||||

```bash

|

||||

helm install jaeger jaegertracing/jaeger \

|

||||

--set provisionDataStore.cassandra=false \

|

||||

--set storage.cassandra.host=<HOST> \

|

||||

--set storage.cassandra.port=<PORT> \

|

||||

--set storage.cassandra.user=<USER> \

|

||||

--set storage.cassandra.password=<PASSWORD>

|

||||

```

|

||||

|

||||

## Installing the Chart using an Existing Cassandra Cluster with TLS

|

||||

|

||||

If you already have an existing running Cassandra cluster with TLS, you can configure the chart as follows to use it as your backing store:

|

||||

|

||||

Content of the `values.yaml` file:

|

||||

|

||||

```YAML
storage:
  type: cassandra
  cassandra:
    host: <HOST>
    port: <PORT>
    user: <USER>
    password: <PASSWORD>
    tls:
      enabled: true
      secretName: cassandra-tls-secret

provisionDataStore:
  cassandra: false
```

|

||||

|

||||

Content of the `jaeger-tls-cassandra-secret.yaml` file:

|

||||

|

||||

```YAML
apiVersion: v1
kind: Secret
metadata:
  name: cassandra-tls-secret
data:
  commonName: <SERVER NAME>
  ca-cert.pem: |
    -----BEGIN CERTIFICATE-----
    <CERT>
    -----END CERTIFICATE-----
  client-cert.pem: |
    -----BEGIN CERTIFICATE-----
    <CERT>
    -----END CERTIFICATE-----
  client-key.pem: |
    -----BEGIN RSA PRIVATE KEY-----
    -----END RSA PRIVATE KEY-----
  cqlshrc: |
    [ssl]
    certfile = ~/.cassandra/ca-cert.pem
    userkey = ~/.cassandra/client-key.pem
    usercert = ~/.cassandra/client-cert.pem

```

|

||||

|

||||

```bash

|

||||

kubectl apply -f jaeger-tls-cassandra-secret.yaml

|

||||

helm install jaeger jaegertracing/jaeger --values values.yaml

|

||||

```

|

||||

|

||||

## Installing the Chart using a New ElasticSearch Cluster

|

||||

|

||||

To install the chart with the release name `jaeger` using a new ElasticSearch cluster instead of Cassandra (default), run the following command:

|

||||

|

||||

```bash

|

||||

helm install jaeger jaegertracing/jaeger \

|

||||

--set provisionDataStore.cassandra=false \

|

||||

--set provisionDataStore.elasticsearch=true \

|

||||

--set storage.type=elasticsearch

|

||||

```
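For a setup that is kept under version control, the same three settings can be written as a values file. This is a sketch that only restates the flags above in YAML form; the file name is an assumption.

```yaml
# jaeger-es.yaml (hypothetical file name)
provisionDataStore:
  cassandra: false      # do not deploy the default Cassandra cluster
  elasticsearch: true   # deploy a new ElasticSearch cluster instead
storage:
  type: elasticsearch
```

It would be applied with `helm install jaeger jaegertracing/jaeger --values jaeger-es.yaml`.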

|

||||

|

||||

## Installing the Chart using an Existing Elasticsearch Cluster

|

||||

|

||||

A release can be configured as follows to use an existing ElasticSearch cluster as the storage backend:

|

||||

|

||||

```bash

|

||||

helm install jaeger jaegertracing/jaeger \

|

||||

--set provisionDataStore.cassandra=false \

|

||||

--set storage.type=elasticsearch \

|

||||

--set storage.elasticsearch.host=<HOST> \

|

||||

--set storage.elasticsearch.port=<PORT> \

|

||||

--set storage.elasticsearch.user=<USER> \

|

||||

--set storage.elasticsearch.password=<PASSWORD>

|

||||

```
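To keep the password off the command line, the `storage.elasticsearch.existingSecret` and `storage.elasticsearch.existingSecretKey` parameters listed in the Configuration table can point at an existing Secret. The fragment below is a minimal sketch, assuming a Secret named `jaeger-es-credentials` (a hypothetical name) already exists in the release namespace and stores the password under the key `password`.

```yaml
provisionDataStore:
  cassandra: false
storage:
  type: elasticsearch
  elasticsearch:
    host: <HOST>
    port: <PORT>
    user: <USER>
    existingSecret: jaeger-es-credentials  # hypothetical Secret name
    existingSecretKey: password            # default key according to the Configuration table
```

According to the Configuration table, `storage.elasticsearch.password` is ignored when `existingSecret` is set.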

|

||||

|

||||

## Installing the Chart using an Existing ElasticSearch Cluster with TLS

|

||||

|

||||

If you already have an existing running ElasticSearch cluster with TLS, you can configure the chart as follows to use it as your backing store:

|

||||

|

||||

Content of the `jaeger-values.yaml` file:

|

||||

|

||||

```YAML
storage:
  type: elasticsearch
  elasticsearch:
    host: <HOST>
    port: <PORT>
    scheme: https
    user: <USER>
    password: <PASSWORD>
provisionDataStore:
  cassandra: false
  elasticsearch: false
query:
  cmdlineParams:
    es.tls.ca: "/tls/es.pem"
  extraConfigmapMounts:
    - name: jaeger-tls
      mountPath: /tls
      subPath: ""
      configMap: jaeger-tls
      readOnly: true
collector:
  cmdlineParams:
    es.tls.ca: "/tls/es.pem"
  extraConfigmapMounts:
    - name: jaeger-tls
      mountPath: /tls
      subPath: ""
      configMap: jaeger-tls
      readOnly: true
spark:
  enabled: true
  cmdlineParams:
    java.opts: "-Djavax.net.ssl.trustStore=/tls/trust.store -Djavax.net.ssl.trustStorePassword=changeit"
  extraConfigmapMounts:
    - name: jaeger-tls
      mountPath: /tls
      subPath: ""
      configMap: jaeger-tls
      readOnly: true

```

|

||||

|

||||

Generate configmap jaeger-tls:

|

||||

|

||||

```bash

|

||||

keytool -import -trustcacerts -keystore trust.store -storepass changeit -alias es-root -file es.pem

|

||||

kubectl create configmap jaeger-tls --from-file=trust.store --from-file=es.pem

|

||||

```

|

||||

|

||||

```bash

|

||||

helm install jaeger jaegertracing/jaeger --values jaeger-values.yaml

|

||||

```

|

||||

|

||||

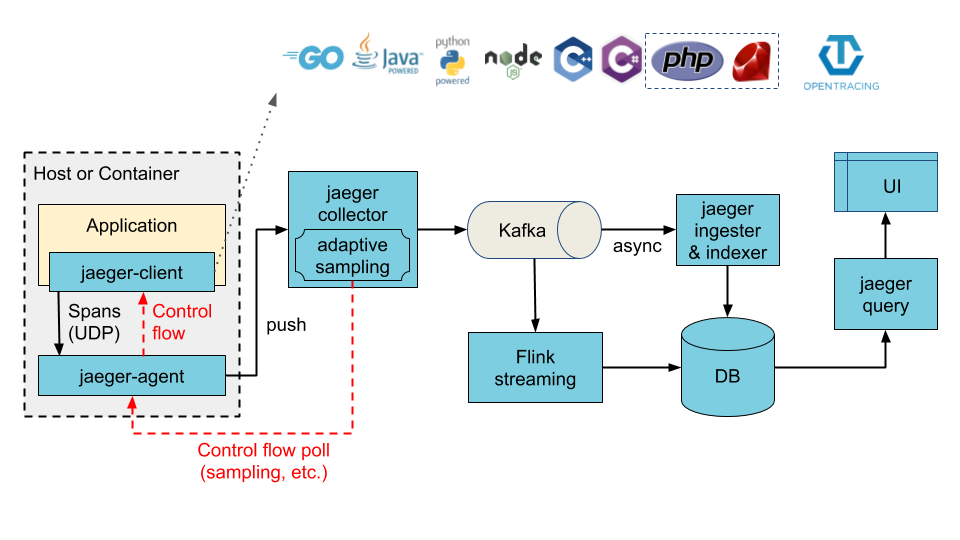

## Installing the Chart with Ingester enabled

|

||||

|

||||

The architecture illustrated below can be achieved by enabling the ingester component. When enabled, Cassandra or Elasticsearch (depending on the configured values) now becomes the ingester's storage backend, whereas Kafka becomes the storage backend of the collector service.

|

||||

|

||||

|

||||

|

||||

## Installing the Chart with Ingester enabled using a New Kafka Cluster

|

||||

|

||||

To provision a new Kafka cluster along with jaeger-ingester:

|

||||

|

||||

```bash

|

||||

helm install jaeger jaegertracing/jaeger \

|

||||

--set provisionDataStore.kafka=true \

|

||||

--set ingester.enabled=true

|

||||

```

|

||||

|

||||

## Installing the Chart with Ingester using an existing Kafka Cluster

|

||||

|

||||

You can also use an existing Kafka cluster with Jaeger:

|

||||

|

||||

```bash

|

||||

helm install jaeger jaegertracing/jaeger \

|

||||

--set ingester.enabled=true \

|

||||

--set storage.kafka.brokers={<BROKER1:PORT>,<BROKER2:PORT>} \

|

||||

--set storage.kafka.topic=<TOPIC>

|

||||

```
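The `{...}` syntax in `--set storage.kafka.brokers` produces a list, so in a values file the brokers become a YAML sequence. The fragment below is a sketch of the equivalent values, with the same placeholders as the command above.

```yaml
ingester:
  enabled: true
storage:
  kafka:
    brokers:
      - <BROKER1:PORT>
      - <BROKER2:PORT>
    topic: <TOPIC>
```

Writing the brokers as a sequence also avoids having to quote the braces in shells that would otherwise expand them.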

|

||||

|

||||

## Configuration

|

||||

|

||||

The following table lists the configurable parameters of the Jaeger chart and their default values.

|

||||

|

||||

| Parameter | Description | Default |

|

||||

|-----------|-------------|---------|

|

||||

| `<agent\|collector\|query\|ingester>.cmdlineParams` | Additional command line parameters | `nil` |

|

||||

| `<component>.extraEnv` | Additional environment variables | [] |

|

||||

| `<component>.nodeSelector` | Node selector | {} |

|

||||

| `<component>.tolerations` | Node tolerations | [] |

|

||||

| `<component>.affinity` | Affinity | {} |

|

||||

| `<component>.podAnnotations` | Pod annotations | `nil` |

|

||||

| `<component>.podSecurityContext` | Pod security context | {} |

|

||||

| `<component>.securityContext` | Container security context | {} |

|

||||

| `<component>.serviceAccount.create` | Create service account | `true` |

|

||||

| `<component>.serviceAccount.name` | The name of the ServiceAccount to use. If not set and create is true, a name is generated using the fullname template | `nil` |

|

||||

| `<component>.serviceMonitor.enabled` | Create serviceMonitor | `false` |

|

||||

| `<component>.serviceMonitor.additionalLabels` | Add additional labels to serviceMonitor | {} |

|

||||

| `agent.annotations` | Annotations for Agent | `nil` |

|

||||

| `agent.dnsPolicy` | Configure DNS policy for agents | `ClusterFirst` |

|

||||

| `agent.service.annotations` | Annotations for Agent SVC | `nil` |

|

||||

| `agent.service.binaryPort` | jaeger.thrift over binary thrift | `6832` |

|

||||

| `agent.service.compactPort` | jaeger.thrift over compact thrift| `6831` |

|

||||

| `agent.image` | Image for Jaeger Agent | `jaegertracing/jaeger-agent` |

|

||||

| `agent.imagePullSecrets` | Secret to pull the Image for Jaeger Agent | `[]` |

|

||||

| `agent.pullPolicy` | Agent image pullPolicy | `IfNotPresent` |

|

||||

| `agent.service.loadBalancerSourceRanges` | list of IP CIDRs allowed access to load balancer (if supported) | `[]` |

|

||||

| `agent.service.zipkinThriftPort` | zipkin.thrift over compact thrift | `5775` |

|

||||

| `agent.extraConfigmapMounts` | Additional agent configMap mounts | `[]` |

|

||||

| `agent.extraSecretMounts` | Additional agent secret mounts | `[]` |

|

||||

| `agent.useHostNetwork` | Enable hostNetwork for agents | `false` |

|

||||

| `agent.priorityClassName` | Priority class name for the agent pods | `nil` |

|

||||

| `collector.autoscaling.enabled` | Enable horizontal pod autoscaling | `false` |

|

||||

| `collector.autoscaling.minReplicas` | Minimum replicas | 2 |

|

||||

| `collector.autoscaling.maxReplicas` | Maximum replicas | 10 |

|

||||

| `collector.autoscaling.targetCPUUtilizationPercentage` | Target CPU utilization | 80 |

|

||||

| `collector.autoscaling.targetMemoryUtilizationPercentage` | Target memory utilization | `nil` |

|

||||

| `collector.image` | Image for jaeger collector | `jaegertracing/jaeger-collector` |

|

||||

| `collector.imagePullSecrets` | Secret to pull the Image for Jaeger Collector | `[]` |

|

||||

| `collector.pullPolicy` | Collector image pullPolicy | `IfNotPresent` |

|

||||

| `collector.service.annotations` | Annotations for Collector SVC | `nil` |

|

||||

| `collector.service.grpc.port` | Jaeger Agent port for model.proto | `14250` |

|

||||

| `collector.service.http.port` | Client port for HTTP thrift | `14268` |

|

||||

| `collector.service.loadBalancerSourceRanges` | list of IP CIDRs allowed access to load balancer (if supported) | `[]` |

|

||||

| `collector.service.type` | Service type | `ClusterIP` |

|

||||

| `collector.service.zipkin.port` | Zipkin port for JSON/thrift HTTP | `nil` |

|

||||

| `collector.extraConfigmapMounts` | Additional collector configMap mounts | `[]` |

|

||||

| `collector.extraSecretMounts` | Additional collector secret mounts | `[]` |

|

||||

| `collector.samplingConfig` | [Sampling strategies json file](https://www.jaegertracing.io/docs/latest/sampling/#collector-sampling-configuration) | `nil` |

|

||||

| `collector.priorityClassName` | Priority class name for the collector pods | `nil` |

|

||||

| `ingester.enabled` | Enable ingester component, collectors will write to Kafka | `false` |

|

||||

| `ingester.autoscaling.enabled` | Enable horizontal pod autoscaling | `false` |

|

||||

| `ingester.autoscaling.minReplicas` | Minimum replicas | 2 |

|

||||

| `ingester.autoscaling.maxReplicas` | Maximum replicas | 10 |

|

||||

| `ingester.autoscaling.targetCPUUtilizationPercentage` | Target CPU utilization | 80 |

|

||||

| `ingester.autoscaling.targetMemoryUtilizationPercentage` | Target memory utilization | `nil` |

|

||||

| `ingester.service.annotations` | Annotations for Ingester SVC | `nil` |

|

||||

| `ingester.image` | Image for jaeger Ingester | `jaegertracing/jaeger-ingester` |

|

||||

| `ingester.imagePullSecrets` | Secret to pull the Image for Jaeger Ingester | `[]` |

|

||||

| `ingester.pullPolicy` | Ingester image pullPolicy | `IfNotPresent` |

|

||||

| `ingester.service.loadBalancerSourceRanges` | list of IP CIDRs allowed access to load balancer (if supported) | `[]` |

|

||||

| `ingester.service.type` | Service type | `ClusterIP` |

|

||||

| `ingester.extraConfigmapMounts` | Additional Ingester configMap mounts | `[]` |

|

||||

| `ingester.extraSecretMounts` | Additional Ingester secret mounts | `[]` |

|

||||

| `fullnameOverride` | Override full name | `nil` |

|

||||

| `hotrod.enabled` | Enables the Hotrod demo app | `false` |

|

||||

| `hotrod.service.loadBalancerSourceRanges` | list of IP CIDRs allowed access to load balancer (if supported) | `[]` |

|

||||

| `hotrod.image.pullSecrets` | Secret to pull the Image for the Hotrod demo app | `[]` |

|

||||

| `nameOverride` | Override name| `nil` |

|

||||

| `provisionDataStore.cassandra` | Provision Cassandra Data Store| `true` |

|

||||

| `provisionDataStore.elasticsearch` | Provision Elasticsearch Data Store | `false` |

|

||||

| `provisionDataStore.kafka` | Provision Kafka Data Store | `false` |

|

||||

| `query.agentSidecar.enabled` | Enable agent sidecar for query deployment | `true` |

|

||||

| `query.config` | [UI Config json file](https://www.jaegertracing.io/docs/latest/frontend-ui/) | `nil` |

|

||||

| `query.service.annotations` | Annotations for Query SVC | `nil` |

|

||||

| `query.image` | Image for Jaeger Query UI | `jaegertracing/jaeger-query` |

|

||||

| `query.imagePullSecrets` | Secret to pull the Image for Jaeger Query UI | `[]` |

|

||||

| `query.ingress.enabled` | Allow external traffic access | `false` |

|

||||

| `query.ingress.annotations` | Configure annotations for Ingress | `{}` |

|

||||

| `query.ingress.hosts` | Configure host for Ingress | `nil` |

|

||||

| `query.ingress.tls` | Configure tls for Ingress | `nil` |

|

||||

| `query.pullPolicy` | Query UI image pullPolicy | `IfNotPresent` |

|

||||

| `query.service.loadBalancerSourceRanges` | list of IP CIDRs allowed access to load balancer (if supported) | `[]` |

|

||||

| `query.service.nodePort` | Specific node port to use when type is NodePort | `nil` |

|

||||

| `query.service.port` | External accessible port | `80` |

|

||||

| `query.service.type` | Service type | `ClusterIP` |

|

||||

| `query.basePath` | Base path of Query UI, used for ingress as well (if it is enabled) | `/` |

|

||||

| `query.extraConfigmapMounts` | Additional query configMap mounts | `[]` |

|

||||

| `query.priorityClassName` | Priority class name for the Query UI pods | `nil` |

|

||||

| `schema.annotations` | Annotations for the schema job| `nil` |

|

||||

| `schema.extraConfigmapMounts` | Additional cassandra schema job configMap mounts | `[]` |

|

||||

| `schema.image` | Image to setup cassandra schema | `jaegertracing/jaeger-cassandra-schema` |

|

||||

| `schema.imagePullSecrets` | Secret to pull the Image for the Cassandra schema setup job | `[]` |

|

||||

| `schema.pullPolicy` | Schema image pullPolicy | `IfNotPresent` |

|

||||

| `schema.activeDeadlineSeconds` | Deadline in seconds for cassandra schema creation job to complete | `120` |

|

||||

| `schema.keyspace` | Set explicit keyspace name | `nil` |

|

||||

| `spark.enabled` | Enables the dependencies job| `false` |

|

||||

| `spark.image` | Image for the dependencies job| `jaegertracing/spark-dependencies` |

|

||||

| `spark.imagePullSecrets` | Secret to pull the Image for the Spark dependencies job | `[]` |

|

||||

| `spark.pullPolicy` | Image pull policy of the deps image | `Always` |

|

||||

| `spark.schedule` | Schedule of the cron job | `"49 23 * * *"` |

|

||||

| `spark.successfulJobsHistoryLimit` | Cron job successfulJobsHistoryLimit | `5` |

|

||||

| `spark.failedJobsHistoryLimit` | Cron job failedJobsHistoryLimit | `5` |

|

||||

| `spark.tag` | Tag of the dependencies job image | `latest` |

|

||||

| `spark.extraConfigmapMounts` | Additional spark configMap mounts | `[]` |

|

||||

| `spark.extraSecretMounts` | Additional spark secret mounts | `[]` |

|

||||

| `esIndexCleaner.enabled` | Enables the ElasticSearch indices cleanup job| `false` |

|

||||

| `esIndexCleaner.image` | Image for the ElasticSearch indices cleanup job| `jaegertracing/jaeger-es-index-cleaner` |

|

||||

| `esIndexCleaner.imagePullSecrets` | Secret to pull the Image for the ElasticSearch indices cleanup job | `[]` |

|

||||

| `esIndexCleaner.pullPolicy` | Image pull policy of the ES cleanup image | `Always` |

|

||||

| `esIndexCleaner.numberOfDays` | ElasticSearch indices older than this number (Number of days) would be deleted by the CronJob | `7` |

|

||||

| `esIndexCleaner.schedule` | Schedule of the cron job | `"55 23 * * *"` |

|

||||

| `esIndexCleaner.successfulJobsHistoryLimit` | successfulJobsHistoryLimit for ElasticSearch indices cleanup CronJob | `5` |

|

||||

| `esIndexCleaner.failedJobsHistoryLimit` | failedJobsHistoryLimit for ElasticSearch indices cleanup CronJob | `5` |

|

||||

| `esIndexCleaner.tag` | Tag of the ElasticSearch indices cleanup job image | `latest` |

|

||||

| `esIndexCleaner.extraConfigmapMounts` | Additional esIndexCleaner configMap mounts | `[]` |

|

||||

| `esIndexCleaner.extraSecretMounts` | Additional esIndexCleaner secret mounts | `[]` |

|

||||

| `storage.cassandra.env` | Extra cassandra related env vars to be configured on components that talk to cassandra | `cassandra` |

|

||||

| `storage.cassandra.cmdlineParams` | Extra cassandra related command line options to be configured on components that talk to cassandra | `cassandra` |

|

||||

| `storage.cassandra.existingSecret` | Name of existing password secret object (for password authentication) | `nil` |

|

||||

| `storage.cassandra.host` | Provisioned cassandra host | `cassandra` |

|

||||

| `storage.cassandra.keyspace` | Schema name for cassandra | `jaeger_v1_test` |

|

||||

| `storage.cassandra.password` | Provisioned cassandra password (ignored if storage.cassandra.existingSecret set) | `password` |

|

||||

| `storage.cassandra.port` | Provisioned cassandra port | `9042` |

|

||||

| `storage.cassandra.tls.enabled` | Provisioned cassandra TLS connection enabled | `false` |

|

||||

| `storage.cassandra.tls.secretName` | Provisioned cassandra TLS connection existing secret name (possible keys in secret: `ca-cert.pem`, `client-key.pem`, `client-cert.pem`, `cqlshrc`, `commonName`) | `` |

|

||||

| `storage.cassandra.usePassword` | Use password | `true` |

|

||||

| `storage.cassandra.user` | Provisioned cassandra username | `user` |

|

||||

| `storage.elasticsearch.env` | Extra ES related env vars to be configured on components that talk to ES | `nil` |

|

||||

| `storage.elasticsearch.cmdlineParams` | Extra ES related command line options to be configured on components that talk to ES | `nil` |

|

||||

| `storage.elasticsearch.existingSecret` | Name of existing password secret object (for password authentication) | `nil` |

|

||||

| `storage.elasticsearch.existingSecretKey` | Key of the declared password secret | `password` |

|

||||

| `storage.elasticsearch.host` | Provisioned elasticsearch host| `elasticsearch` |

|

||||

| `storage.elasticsearch.password` | Provisioned elasticsearch password (ignored if storage.elasticsearch.existingSecret set) | `changeme` |

|

||||

| `storage.elasticsearch.port` | Provisioned elasticsearch port| `9200` |

|

||||

| `storage.elasticsearch.scheme` | Provisioned elasticsearch scheme | `http` |

|

||||

| `storage.elasticsearch.usePassword` | Use password | `true` |

|

||||

| `storage.elasticsearch.user` | Provisioned elasticsearch user| `elastic` |

|

||||

| `storage.elasticsearch.indexPrefix` | Index Prefix for elasticsearch | `nil` |

|

||||

| `storage.elasticsearch.nodesWanOnly` | Only access specified es host | `false` |

|

||||

| `storage.kafka.authentication` | Authentication type used to authenticate with kafka cluster. e.g. none, kerberos, tls | `none` |

|

||||

| `storage.kafka.brokers` | Broker List for Kafka with port | `kafka:9092` |

|

||||

| `storage.kafka.topic` | Topic name for Kafka | `jaeger_v1_test` |

|

||||

| `storage.type` | Storage type (ES or Cassandra)| `cassandra` |

|

||||

| `tag` | Image tag/version | `1.18.0` |

|

||||

|

||||

For more information about some of the tunable parameters that Cassandra provides, please visit the helm chart for [cassandra](https://github.com/kubernetes/charts/tree/master/incubator/cassandra) and the official [website](http://cassandra.apache.org/) at apache.org.

|

||||

|

||||

For more information about some of the tunable parameters that Jaeger provides, please visit the official [Jaeger repo](https://github.com/uber/jaeger) at GitHub.com.
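As an illustration of how parameters from the configuration table combine, the fragment below sketches a values file that turns on collector autoscaling and the ElasticSearch index cleanup job. All keys come from the table and the numbers are the table's defaults written out explicitly. Pairing the cleanup job with `storage.type: elasticsearch` is an assumption about a sensible setup, not something the table states.

```yaml
storage:
  type: elasticsearch
collector:
  autoscaling:
    enabled: true
    minReplicas: 2
    maxReplicas: 10
    targetCPUUtilizationPercentage: 80
esIndexCleaner:
  enabled: true
  numberOfDays: 7          # indices older than this many days are deleted
  schedule: "55 23 * * *"  # cron expression: every day at 23:55
```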

|

||||

|

||||

### Pending enhancements

|

||||

|

||||

- [ ] Sidecar deployment support

|

||||

Binary file not shown.

|

|

@ -1,17 +0,0 @@

|

|||

# Patterns to ignore when building packages.

|

||||

# This supports shell glob matching, relative path matching, and

|

||||

# negation (prefixed with !). Only one pattern per line.

|

||||

.DS_Store

|

||||

# Common VCS dirs

|

||||

.git/

|

||||

.gitignore

|

||||

# Common backup files

|

||||

*.swp

|

||||

*.bak

|

||||

*.tmp

|

||||

*~

|

||||

# Various IDEs

|

||||

.project

|

||||

.idea/

|

||||

*.tmproj

|

||||

OWNERS

|

||||

|

|

@ -1,19 +0,0 @@

|

|||

apiVersion: v1

|

||||

appVersion: 3.11.6

|

||||

description: Apache Cassandra is a free and open-source distributed database management

|

||||

system designed to handle large amounts of data across many commodity servers, providing

|

||||

high availability with no single point of failure.

|

||||

engine: gotpl

|

||||

home: http://cassandra.apache.org

|

||||

icon: https://upload.wikimedia.org/wikipedia/commons/thumb/5/5e/Cassandra_logo.svg/330px-Cassandra_logo.svg.png

|

||||

keywords:

|

||||

- cassandra

|

||||

- database

|

||||

- nosql

|

||||

maintainers:

|

||||

- email: goonohc@gmail.com

|

||||

name: KongZ

|

||||

- email: maor.friedman@redhat.com

|

||||

name: maorfr

|

||||

name: cassandra

|

||||

version: 0.15.2

|

||||

|

|

@ -1,218 +0,0 @@

|

|||

# Cassandra

|

||||

A Cassandra Chart for Kubernetes

|

||||

|

||||

## Install Chart

|

||||

To install the Cassandra chart into your Kubernetes cluster, run the command below. This chart requires a persistent volume by default, so you may need to create a storage class before installing it; see the [Persist data](#persist_data) section.

|

||||

|

||||

```bash

|

||||

helm install --namespace "cassandra" -n "cassandra" incubator/cassandra

|

||||

```

|

||||

|

||||

After installation succeeds, you can check the status of the release:

|

||||

|

||||

```bash

|

||||

helm status "cassandra"

|

||||

```

|

||||

|

||||

If you want to delete your release, use this command:

|

||||

```bash

|

||||

helm delete --purge "cassandra"

|

||||

```

|

||||

|

||||

## Upgrading

|

||||

|

||||

To upgrade your Cassandra release, simply run

|

||||

|

||||

```bash

|

||||

helm upgrade "cassandra" incubator/cassandra

|

||||

```

|

||||

|

||||

### 0.12.0

|

||||

|

||||

This version fixes https://github.com/helm/charts/issues/7803 by removing mutable labels in `spec.VolumeClaimTemplate.metadata.labels` so that it is upgradable.

|

||||

|

||||

For versions before this one, you have to delete the Cassandra StatefulSet before upgrading:

|

||||

```bash

|

||||

$ kubectl delete statefulset --cascade=false my-cassandra-release

|

||||

```

|

||||

|

||||

|

||||

## Persist data

|

||||

You need to create a `StorageClass` before you can persist data in a persistent volume.

|

||||

To create a `StorageClass` on Google Cloud, run the following

|

||||

|

||||

```bash

|

||||

kubectl create -f sample/create-storage-gce.yaml

|

||||

```

|

||||

|

||||

And set the following values in `values.yaml`

|

||||

|

||||

```yaml
persistence:
  enabled: true
```

|

||||

|

||||

If you want to create a `StorageClass` on another platform, see the documentation at [https://kubernetes.io/docs/user-guide/persistent-volumes/](https://kubernetes.io/docs/user-guide/persistent-volumes/)

|

||||

|

||||

When running a cluster without persistence, the termination of a pod will first initiate a decommissioning of that pod.

|

||||

Depending on the amount of data stored inside the cluster this may take a while. In order to complete a graceful

|

||||

termination, pods need a longer termination grace period. Set the following values in `values.yaml`:

|

||||

|

||||

```yaml

|

||||

podSettings:

|

||||

  terminationGracePeriodSeconds: 1800

|

||||

```

|

||||

|

||||

## Install Chart with specific cluster size

|

||||

By default, this chart creates a Cassandra cluster with 3 nodes. To change the cluster size during installation, pass the `--set config.cluster_size={value}` argument or edit `values.yaml`.

|

||||

|

||||

For example:

|

||||

Set cluster size to 5

|

||||

|

||||

```bash

|

||||

helm install --namespace "cassandra" -n "cassandra" --set config.cluster_size=5 incubator/cassandra

|

||||

```
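
The same change can be made in a values file instead of on the command line. This is a minimal sketch, assuming the `config.cluster_size` key listed in the configuration table; the file can then be passed to Helm with `-f values.yaml`.

```yaml
# values.yaml (sketch): run a 5-node Cassandra cluster
config:
  cluster_size: 5
```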

|

||||

|

||||

## Install Chart with specific resource size

|

||||

By default, this chart creates Cassandra pods with 2 vCPUs and 4Gi of memory, which is suitable for a development environment.

|

||||

For production use, consider raising the resources to 4 vCPUs and 16Gi of memory, and increasing `max_heap_size` and `heap_new_size` accordingly.

|

||||

To update the settings, edit `values.yaml`
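
A production-oriented override might look like the sketch below. The `requests`/`limits` layout follows the standard Kubernetes resource schema, and the heap sizes are illustrative assumptions rather than values recommended by the chart; tune them for your workload.

```yaml
# values.yaml (sketch): larger resources for a production cluster
resources:
  requests:
    cpu: 4
    memory: 16Gi
  limits:
    cpu: 4
    memory: 16Gi
config:
  max_heap_size: 8192M   # illustrative; the documented default is 2048M
  heap_new_size: 2048M   # illustrative; the documented default is 512M
```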

|

||||

|

||||

## Install Chart with specific node

|

||||

Sometimes you may need to deploy Cassandra to specific nodes to allocate resources. You can use a node selector by setting `nodes.enabled=true` in `values.yaml`.

|

||||

For example, suppose you have 6 VMs in your node pools and want to deploy Cassandra to nodes labeled `cloud.google.com/gke-nodepool: pool-db`.

|

||||

|

||||

Set the following values in `values.yaml`

|

||||

|

||||

```yaml

|

||||

nodes:
  enabled: true
selector:
  nodeSelector:
    cloud.google.com/gke-nodepool: pool-db

|

||||

```

|

||||

|

||||

## Configuration

|

||||

|

||||

The following table lists the configurable parameters of the Cassandra chart and their default values.

|

||||

|

||||

| Parameter | Description | Default |

|

||||

| ----------------------- | --------------------------------------------- | ---------------------------------------------------------- |

|

||||

| `image.repo` | `cassandra` image repository | `cassandra` |

|

||||

| `image.tag` | `cassandra` image tag | `3.11.5` |

|

||||

| `image.pullPolicy` | Image pull policy | `Always` if `image.tag` is `latest`, else `IfNotPresent` |

|

||||

| `image.pullSecrets` | Image pull secrets | `nil` |

|

||||

| `config.cluster_domain` | The name of the cluster domain. | `cluster.local` |

|

||||

| `config.cluster_name` | The name of the cluster. | `cassandra` |

|

||||

| `config.cluster_size` | The number of nodes in the cluster. | `3` |

|

||||

| `config.seed_size` | The number of seed nodes used to bootstrap new clients joining the cluster. | `2` |

|

||||

| `config.seeds` | The comma-separated list of seed nodes. | Automatically generated according to `.Release.Name` and `config.seed_size` |

|

||||

| `config.num_tokens` | Number of tokens (virtual nodes) assigned to each node | `256` |

|

||||

| `config.dc_name` | Name of the datacenter | `DC1` |

|

||||

| `config.rack_name` | Name of the rack | `RAC1` |

|

||||

| `config.endpoint_snitch` | Endpoint snitch used by the cluster | `SimpleSnitch` |

|

||||

| `config.max_heap_size` | Maximum JVM heap size | `2048M` |

|

||||

| `config.heap_new_size` | JVM young-generation heap size | `512M` |

|

||||

| `config.ports.cql` | CQL native transport port | `9042` |

|

||||

| `config.ports.thrift` | Thrift RPC port | `9160` |

|

||||

| `config.ports.agent` | The port of the JVM Agent (if any) | `nil` |

|

||||

| `config.start_rpc` | Whether to start the Thrift RPC server | `false` |

|

||||

| `configOverrides` | Overrides config files in /etc/cassandra dir | `{}` |

|

||||

| `commandOverrides` | Overrides default docker command | `[]` |

|

||||

| `argsOverrides` | Overrides default docker args | `[]` |

|

||||

| `env` | Custom env variables | `{}` |

|

||||

| `schedulerName` | Name of k8s scheduler (other than the default) | `nil` |

|

||||

| `persistence.enabled` | Use a PVC to persist data | `true` |

|

||||

| `persistence.storageClass` | Storage class of backing PVC | `nil` (uses alpha storage class annotation) |

|

||||

| `persistence.accessMode` | Use volume as ReadOnly or ReadWrite | `ReadWriteOnce` |

|

||||

| `persistence.size` | Size of data volume | `10Gi` |

|

||||

| `resources` | CPU/Memory resource requests/limits | Memory: `4Gi`, CPU: `2` |

|

||||

| `service.type` | k8s service type exposing ports, e.g. `NodePort`| `ClusterIP` |

|

||||

| `service.annotations` | Annotations to apply to cassandra service | `""` |

|

||||

| `podManagementPolicy` | podManagementPolicy of the StatefulSet | `OrderedReady` |

|

||||

| `podDisruptionBudget` | Pod disruption budget | `{}` |

|

||||

| `podAnnotations` | pod annotations for the StatefulSet | `{}` |

|

||||

| `updateStrategy.type` | UpdateStrategy of the StatefulSet | `OnDelete` |

|

||||

| `livenessProbe.initialDelaySeconds` | Delay before liveness probe is initiated | `90` |

|

||||

| `livenessProbe.periodSeconds` | How often to perform the probe | `30` |

|

||||

| `livenessProbe.timeoutSeconds` | When the probe times out | `5` |

|

||||

| `livenessProbe.successThreshold` | Minimum consecutive successes for the probe to be considered successful after having failed. | `1` |

|

||||

| `livenessProbe.failureThreshold` | Minimum consecutive failures for the probe to be considered failed after having succeeded. | `3` |

|

||||

| `readinessProbe.initialDelaySeconds` | Delay before readiness probe is initiated | `90` |

|

||||

| `readinessProbe.periodSeconds` | How often to perform the probe | `30` |

|

||||

| `readinessProbe.timeoutSeconds` | When the probe times out | `5` |

|

||||

| `readinessProbe.successThreshold` | Minimum consecutive successes for the probe to be considered successful after having failed. | `1` |

|

||||

| `readinessProbe.failureThreshold` | Minimum consecutive failures for the probe to be considered failed after having succeeded. | `3` |

|

||||

| `readinessProbe.address` | Address to use for checking node has joined the cluster and is ready. | `${POD_IP}` |

|

||||

| `rbac.create` | Specifies whether RBAC resources should be created | `true` |

|

||||

| `serviceAccount.create` | Specifies whether a ServiceAccount should be created | `true` |

|

||||

| `serviceAccount.name` | The name of the ServiceAccount to use | |

|

||||

| `backup.enabled` | Enable backup on chart installation | `false` |

|

||||

| `backup.schedule` | Keyspaces to backup, each with cron time | |

|

||||

| `backup.annotations` | Backup pod annotations | iam.amazonaws.com/role: `cain` |

|

||||

| `backup.image.repository` | Backup image repository | `maorfr/cain` |

|

||||

| `backup.image.tag` | Backup image tag | `0.6.0` |

|

||||

| `backup.extraArgs` | Additional arguments for cain | `[]` |

|

||||

| `backup.env` | Backup environment variables | AWS_REGION: `us-east-1` |

|

||||

| `backup.resources` | Backup CPU/Memory resource requests/limits | Memory: `1Gi`, CPU: `1` |

|

||||

| `backup.destination` | Destination to store backup artifacts | `s3://bucket/cassandra` |

|

||||

| `backup.google.serviceAccountSecret` | Secret containing credentials if GCS is used as destination | |

|

||||

| `exporter.enabled` | Enable Cassandra exporter | `false` |

|

||||

| `exporter.serviceMonitor.enabled` | Enable ServiceMonitor for exporter | `true` |

|

||||

| `exporter.serviceMonitor.additionalLabels`| Additional labels for Service Monitor | `{}` |

|

||||

| `exporter.image.repo` | Exporter image repository | `criteord/cassandra_exporter` |

|

||||

| `exporter.image.tag` | Exporter image tag | `2.0.2` |

|

||||

| `exporter.port` | Exporter port | `5556` |

|

||||

| `exporter.jvmOpts` | Exporter additional JVM options | |

|

||||

| `exporter.resources` | Exporter CPU/Memory resource requests/limits | `{}` |

|

||||

| `extraContainers` | Sidecar containers for the pods | `[]` |

|

||||

| `extraVolumes` | Additional volumes for the pods | `[]` |

|

||||

| `extraVolumeMounts` | Extra volume mounts for the pods | `[]` |

|

||||

| `affinity` | Kubernetes node affinity | `{}` |

|

||||

| `tolerations` | Kubernetes node tolerations | `[]` |
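
To illustrate how the `backup.*` parameters fit together, here is a hedged sketch of a backup configuration. The CronJob template in this chart reads a `keyspace` and a `cron` field from each `backup.schedule` entry and renders `backup.env` as a list of container environment variables. The keyspace name and schedule below are hypothetical; the destination and region are the documented defaults.

```yaml
# values.yaml (sketch): back up one keyspace on a daily schedule
backup:
  enabled: true
  schedule:
    - keyspace: keyspace1        # hypothetical keyspace name
      cron: "0 7 * * *"          # once a day at 07:00
  destination: s3://bucket/cassandra
  env:
    - name: AWS_REGION
      value: us-east-1
```

One CronJob is created per schedule entry, so adding more keyspaces means adding more list items under `backup.schedule`.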

|

||||

|

||||

|

||||

## Scale cassandra

|

||||

When you want to change the cluster size of your cassandra, you can use the helm upgrade command.

|

||||

|

||||

```bash

|

||||

helm upgrade --set config.cluster_size=5 cassandra incubator/cassandra

|

||||

```

|

||||

|

||||

## Get cassandra status

|

||||

You can get your cassandra cluster status by running the command

|

||||

|

||||

```bash

|

||||

kubectl exec -it --namespace cassandra $(kubectl get pods --namespace cassandra -l app=cassandra-cassandra -o jsonpath='{.items[0].metadata.name}') nodetool status

|

||||

```

|

||||

|

||||

Output

|

||||

```bash

|

||||

Datacenter: asia-east1

|

||||

======================

|

||||

Status=Up/Down

|

||||

|/ State=Normal/Leaving/Joining/Moving

|

||||

-- Address Load Tokens Owns (effective) Host ID Rack

|

||||

UN 10.8.1.11 108.45 KiB 256 66.1% 410cc9da-8993-4dc2-9026-1dd381874c54 a

|

||||

UN 10.8.4.12 84.08 KiB 256 68.7% 96e159e1-ef94-406e-a0be-e58fbd32a830 c

|

||||

UN 10.8.3.6 103.07 KiB 256 65.2% 1a42b953-8728-4139-b070-b855b8fff326 b

|

||||

```

|

||||

|

||||

## Benchmark

|

||||

You can use the [cassandra-stress](https://docs.datastax.com/en/cassandra/3.0/cassandra/tools/toolsCStress.html) tool to benchmark the cluster with the following command:

|

||||

|

||||

```bash

|

||||

kubectl exec -it --namespace cassandra $(kubectl get pods --namespace cassandra -l app=cassandra-cassandra -o jsonpath='{.items[0].metadata.name}') cassandra-stress

|

||||

```

|

||||

|

||||

Example `cassandra-stress` arguments:

|

||||

- Run both reads and writes with a ratio of 9:1

|

||||

- Operate on a total of 1 million keys with a uniform distribution

|

||||

- Use QUORUM for read/write

|

||||

- Generate 50 threads

|

||||

- Generate result in graph

|

||||

- Use NetworkTopologyStrategy with replication factor 2

|

||||

|

||||

```bash

|

||||

cassandra-stress mixed ratio\(write=1,read=9\) n=1000000 cl=QUORUM -pop dist=UNIFORM\(1..1000000\) -mode native cql3 -rate threads=50 -log file=~/mixed_autorate_r9w1_1M.log -graph file=test2.html title=test revision=test2 -schema "replication(strategy=NetworkTopologyStrategy, factor=2)"

|

||||

```

|

||||

|

|

@ -1,7 +0,0 @@

|

|||

kind: StorageClass

|

||||

apiVersion: storage.k8s.io/v1

|

||||

metadata:

|

||||

name: generic

|

||||

provisioner: kubernetes.io/gce-pd

|

||||

parameters:

|

||||

type: pd-ssd

|

||||

|

|

@ -1,35 +0,0 @@

|

|||

Cassandra CQL can be accessed via port {{ .Values.config.ports.cql }} on the following DNS name from within your cluster:

|

||||

Cassandra Thrift can be accessed via port {{ .Values.config.ports.thrift }} on the following DNS name from within your cluster:

|

||||

|

||||

If you want to connect to the remote instance with your local Cassandra CQL CLI, forward the API port to localhost:9042 by running the following:

|

||||

- kubectl port-forward --namespace {{ .Release.Namespace }} $(kubectl get pods --namespace {{ .Release.Namespace }} -l app={{ template "cassandra.name" . }},release={{ .Release.Name }} -o jsonpath='{ .items[0].metadata.name }') 9042:{{ .Values.config.ports.cql }}

|

||||

|

||||

If you want to connect to the Cassandra CQL run the following:

|

||||

{{- if contains "NodePort" .Values.service.type }}

|

||||

- export CQL_PORT=$(kubectl get --namespace {{ .Release.Namespace }} -o jsonpath="{.spec.ports[0].nodePort}" services {{ template "cassandra.fullname" . }})

|

||||

- export CQL_HOST=$(kubectl get nodes --namespace {{ .Release.Namespace }} -o jsonpath="{.items[0].status.addresses[0].address}")

|

||||

- cqlsh $CQL_HOST $CQL_PORT

|

||||

|

||||

{{- else if contains "LoadBalancer" .Values.service.type }}

|

||||

NOTE: It may take a few minutes for the LoadBalancer IP to be available.

|

||||

Watch the status with: 'kubectl get svc --namespace {{ .Release.Namespace }} -w {{ template "cassandra.fullname" . }}'

|

||||

- export SERVICE_IP=$(kubectl get svc --namespace {{ .Release.Namespace }} {{ template "cassandra.fullname" . }} -o jsonpath='{.status.loadBalancer.ingress[0].ip}')

|

||||

- echo cqlsh $SERVICE_IP

|

||||

{{- else if contains "ClusterIP" .Values.service.type }}

|

||||

- kubectl port-forward --namespace {{ .Release.Namespace }} $(kubectl get pods --namespace {{ .Release.Namespace }} -l "app={{ template "cassandra.name" . }},release={{ .Release.Name }}" -o jsonpath="{.items[0].metadata.name}") 9042:{{ .Values.config.ports.cql }}

|

||||

echo cqlsh 127.0.0.1 9042

|

||||

{{- end }}

|

||||

|

||||

You can also see the cluster status by running the following:

|

||||

- kubectl exec -it --namespace {{ .Release.Namespace }} $(kubectl get pods --namespace {{ .Release.Namespace }} -l app={{ template "cassandra.name" . }},release={{ .Release.Name }} -o jsonpath='{.items[0].metadata.name}') nodetool status

|

||||

|

||||

To tail the logs for the Cassandra pod run the following:

|

||||

- kubectl logs -f --namespace {{ .Release.Namespace }} $(kubectl get pods --namespace {{ .Release.Namespace }} -l app={{ template "cassandra.name" . }},release={{ .Release.Name }} -o jsonpath='{ .items[0].metadata.name }')

|

||||

|

||||

{{- if not .Values.persistence.enabled }}

|

||||

|

||||

Note that the cluster is running with node-local storage instead of PersistentVolumes. In order to prevent data loss,

|

||||

pods will be decommissioned upon termination. Decommissioning may take some time, so you might also want to adjust the

|

||||

pod termination grace period, which is currently set to {{ .Values.podSettings.terminationGracePeriodSeconds }} seconds.

|

||||

|

||||

{{- end}}

|

||||

|

|

@ -1,43 +0,0 @@

|

|||

{{/* vim: set filetype=mustache: */}}

|

||||

{{/*

|

||||

Expand the name of the chart.

|

||||

*/}}

|

||||

{{- define "cassandra.name" -}}

|

||||

{{- default .Chart.Name .Values.nameOverride | trunc 63 | trimSuffix "-" -}}

|

||||

{{- end -}}

|

||||

|

||||

{{/*

|

||||

Create a default fully qualified app name.

|

||||

We truncate at 63 chars because some Kubernetes name fields are limited to this (by the DNS naming spec).

|

||||

If release name contains chart name it will be used as a full name.

|

||||

*/}}

|

||||

{{- define "cassandra.fullname" -}}

|

||||

{{- if .Values.fullnameOverride -}}

|

||||

{{- .Values.fullnameOverride | trunc 63 | trimSuffix "-" -}}

|

||||

{{- else -}}

|

||||

{{- $name := default .Chart.Name .Values.nameOverride -}}

|

||||

{{- if contains $name .Release.Name -}}

|

||||

{{- .Release.Name | trunc 63 | trimSuffix "-" -}}

|

||||

{{- else -}}

|

||||

{{- printf "%s-%s" .Release.Name $name | trunc 63 | trimSuffix "-" -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

|

||||

{{/*

|

||||

Create chart name and version as used by the chart label.

|

||||

*/}}

|

||||

{{- define "cassandra.chart" -}}

|

||||

{{- printf "%s-%s" .Chart.Name .Chart.Version | replace "+" "_" | trunc 63 | trimSuffix "-" -}}

|

||||

{{- end -}}

|

||||

|

||||

{{/*

|

||||

Create the name of the service account to use

|

||||

*/}}

|

||||

{{- define "cassandra.serviceAccountName" -}}

|

||||

{{- if .Values.serviceAccount.create -}}

|

||||

{{ default (include "cassandra.fullname" .) .Values.serviceAccount.name }}

|

||||

{{- else -}}

|

||||

{{ default "default" .Values.serviceAccount.name }}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

|

|

@ -1,90 +0,0 @@

|

|||

{{- if .Values.backup.enabled }}

|

||||

{{- $release := .Release }}

|

||||

{{- $values := .Values }}

|

||||

{{- $backup := $values.backup }}

|

||||

{{- range $index, $schedule := $backup.schedule }}

|

||||

---

|

||||

apiVersion: batch/v1beta1

|

||||

kind: CronJob

|

||||

metadata:

|

||||

name: {{ template "cassandra.fullname" $ }}-backup-{{ $schedule.keyspace | replace "_" "-" }}

|

||||

labels:

|

||||

app: {{ template "cassandra.name" $ }}-cain

|

||||

chart: {{ template "cassandra.chart" $ }}

|

||||

release: "{{ $release.Name }}"

|

||||

heritage: "{{ $release.Service }}"

|

||||

spec:

|

||||

schedule: {{ $schedule.cron | quote }}

|

||||

concurrencyPolicy: Forbid

|

||||

startingDeadlineSeconds: 120

|

||||

jobTemplate:

|

||||

spec:

|

||||

template:

|

||||

metadata:

|

||||

annotations:

|

||||

{{ toYaml $backup.annotations }}

|

||||

spec:

|

||||

restartPolicy: OnFailure

|

||||

serviceAccountName: {{ template "cassandra.serviceAccountName" $ }}

|

||||

containers:

|

||||

- name: cassandra-backup

|

||||

image: "{{ $backup.image.repository }}:{{ $backup.image.tag }}"

|

||||

command: ["cain"]

|

||||

args:

|

||||

- backup

|

||||

- --namespace

|

||||

- {{ $release.Namespace }}

|

||||

- --selector

|

||||

- release={{ $release.Name }},app={{ template "cassandra.name" $ }}

|

||||

- --keyspace

|

||||

- {{ $schedule.keyspace }}

|

||||

- --dst

|

||||

- {{ $backup.destination }}

|

||||

{{- with $backup.extraArgs }}

|

||||

{{ toYaml . | indent 12 }}

|

||||

{{- end }}

|

||||

env:

|

||||

{{- if $backup.google.serviceAccountSecret }}

|

||||

- name: GOOGLE_APPLICATION_CREDENTIALS

|

||||

value: "/etc/secrets/google/credentials.json"

|

||||

{{- end }}

|

||||

{{- with $backup.env }}

|

||||

{{ toYaml . | indent 12 }}

|

||||

{{- end }}

|

||||

{{- with $backup.resources }}

|

||||

resources:

|

||||

{{ toYaml . | indent 14 }}

|

||||

{{- end }}

|

||||

{{- if $backup.google.serviceAccountSecret }}

|

||||

volumeMounts:

|

||||

- name: google-service-account

|

||||

mountPath: /etc/secrets/google/

|

||||

{{- end }}

|

||||

{{- if $backup.google.serviceAccountSecret }}

|

||||

volumes:

|

||||

- name: google-service-account

|

||||

secret:

|

||||

secretName: {{ $backup.google.serviceAccountSecret | quote }}

|

||||

{{- end }}

|

||||

affinity:

|

||||

podAffinity:

|

||||

preferredDuringSchedulingIgnoredDuringExecution:

|

||||

- weight: 1

|

||||

podAffinityTerm:

|

||||

labelSelector:

|

||||

matchExpressions:

|

||||

- key: app

|

||||

operator: In

|

||||

values:

|

||||

- {{ template "cassandra.fullname" $ }}

|

||||

- key: release

|

||||

operator: In

|

||||

values:

|

||||

- {{ $release.Name }}

|

||||

topologyKey: "kubernetes.io/hostname"

|

||||

{{- with $values.tolerations }}

|

||||

tolerations:

|

||||

{{ toYaml . | indent 12 }}

|

||||

{{- end }}

|

||||

{{- end }}

|

||||

{{- end }}

|

||||

|

|

@ -1,50 +0,0 @@

|

|||

{{- if .Values.backup.enabled }}

|

||||

{{- if .Values.serviceAccount.create }}

|

||||

apiVersion: v1

|

||||

kind: ServiceAccount

|

||||

metadata:

|

||||

name: {{ template "cassandra.serviceAccountName" . }}

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

chart: {{ template "cassandra.chart" . }}

|

||||

release: "{{ .Release.Name }}"

|

||||

heritage: "{{ .Release.Service }}"

|

||||

---

|

||||

{{- end }}

|

||||

{{- if .Values.rbac.create }}

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

kind: Role

|

||||

metadata:

|

||||

name: {{ template "cassandra.fullname" . }}-backup

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

chart: {{ template "cassandra.chart" . }}

|

||||

release: "{{ .Release.Name }}"

|

||||

heritage: "{{ .Release.Service }}"

|

||||

rules:

|

||||

- apiGroups: [""]

|

||||

resources: ["pods", "pods/log"]

|

||||

verbs: ["get", "list"]

|

||||

- apiGroups: [""]

|

||||

resources: ["pods/exec"]

|

||||

verbs: ["create"]

|

||||

---

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

kind: RoleBinding

|

||||

metadata:

|

||||

name: {{ template "cassandra.fullname" . }}-backup

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

chart: {{ template "cassandra.chart" . }}

|

||||

release: "{{ .Release.Name }}"

|

||||

heritage: "{{ .Release.Service }}"

|

||||

roleRef:

|

||||

apiGroup: rbac.authorization.k8s.io

|

||||

kind: Role

|

||||

name: {{ template "cassandra.fullname" . }}-backup

|

||||

subjects:

|

||||

- kind: ServiceAccount

|

||||

name: {{ template "cassandra.serviceAccountName" . }}

|

||||

namespace: {{ .Release.Namespace }}

|

||||

{{- end }}

|

||||

{{- end }}

|

||||

|

|

@ -1,14 +0,0 @@

|

|||

{{- if .Values.configOverrides }}

|

||||

kind: ConfigMap

|

||||

apiVersion: v1

|

||||

metadata:

|

||||

name: {{ template "cassandra.name" . }}

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

chart: {{ .Chart.Name }}-{{ .Chart.Version | replace "+" "_" }}

|

||||

release: {{ .Release.Name }}

|

||||

heritage: {{ .Release.Service }}

|

||||

data:

|

||||

{{ toYaml .Values.configOverrides | indent 2 }}

|

||||

{{- end }}

|

||||

|

|

@ -1,18 +0,0 @@

|

|||

{{- if .Values.podDisruptionBudget -}}

|

||||

apiVersion: policy/v1beta1

|

||||

kind: PodDisruptionBudget

|

||||

metadata:

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

chart: {{ .Chart.Name }}-{{ .Chart.Version }}

|

||||

heritage: {{ .Release.Service }}

|

||||

release: {{ .Release.Name }}

|

||||

name: {{ template "cassandra.fullname" . }}

|

||||

namespace: {{ .Release.Namespace }}

|

||||

spec:

|

||||

selector:

|

||||

matchLabels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

release: {{ .Release.Name }}

|

||||

{{ toYaml .Values.podDisruptionBudget | indent 2 }}

|

||||

{{- end -}}

|

||||

|

|

@ -1,46 +0,0 @@

|

|||

apiVersion: v1

|

||||

kind: Service

|

||||

metadata:

|

||||

name: {{ template "cassandra.fullname" . }}

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

chart: {{ template "cassandra.chart" . }}

|

||||

release: {{ .Release.Name }}

|

||||

heritage: {{ .Release.Service }}

|

||||

{{- with .Values.service.annotations }}

|

||||

annotations:

|

||||

{{- toYaml . | nindent 4 }}

|

||||

{{- end }}

|

||||

spec:

|

||||

clusterIP: None

|

||||

type: {{ .Values.service.type }}

|

||||

ports:

|

||||

{{- if .Values.exporter.enabled }}

|

||||

- name: metrics

|

||||

port: 5556

|

||||

targetPort: {{ .Values.exporter.port }}

|

||||

{{- end }}

|

||||

- name: intra

|

||||

port: 7000

|

||||

targetPort: 7000

|

||||

- name: tls

|

||||

port: 7001

|

||||

targetPort: 7001

|

||||

- name: jmx

|

||||

port: 7199

|

||||

targetPort: 7199

|

||||

- name: cql

|

||||

port: {{ default 9042 .Values.config.ports.cql }}

|

||||

targetPort: {{ default 9042 .Values.config.ports.cql }}

|

||||

- name: thrift

|

||||

port: {{ default 9160 .Values.config.ports.thrift }}

|

||||

targetPort: {{ default 9160 .Values.config.ports.thrift }}

|

||||

{{- if .Values.config.ports.agent }}

|

||||

- name: agent

|

||||

port: {{ .Values.config.ports.agent }}

|

||||

targetPort: {{ .Values.config.ports.agent }}

|

||||

{{- end }}

|

||||

selector:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

release: {{ .Release.Name }}

|

||||

|

|

@ -1,25 +0,0 @@

|

|||

{{- if and .Values.exporter.enabled .Values.exporter.serviceMonitor.enabled }}

|

||||

apiVersion: monitoring.coreos.com/v1

|

||||

kind: ServiceMonitor

|

||||

metadata:

|

||||

name: {{ template "cassandra.fullname" . }}

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

chart: {{ template "cassandra.chart" . }}

|

||||

release: {{ .Release.Name }}

|

||||

heritage: {{ .Release.Service }}

|

||||

{{- if .Values.exporter.serviceMonitor.additionalLabels }}

|

||||

{{ toYaml .Values.exporter.serviceMonitor.additionalLabels | indent 4 }}

|

||||

{{- end }}

|

||||

spec:

|

||||

jobLabel: {{ template "cassandra.name" . }}

|

||||

endpoints:

|

||||

- port: metrics

|

||||

interval: 10s

|

||||

selector:

|

||||

matchLabels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

namespaceSelector:

|

||||

any: true

|

||||

{{- end }}

|

||||

|

|

@ -1,230 +0,0 @@

|

|||

apiVersion: apps/v1

|

||||

kind: StatefulSet

|

||||

metadata:

|

||||

name: {{ template "cassandra.fullname" . }}

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

chart: {{ template "cassandra.chart" . }}

|

||||

release: {{ .Release.Name }}

|

||||

heritage: {{ .Release.Service }}

|

||||

spec:

|

||||

selector:

|

||||

matchLabels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

release: {{ .Release.Name }}

|

||||

serviceName: {{ template "cassandra.fullname" . }}

|

||||

replicas: {{ .Values.config.cluster_size }}

|

||||

podManagementPolicy: {{ .Values.podManagementPolicy }}

|

||||

updateStrategy:

|

||||

type: {{ .Values.updateStrategy.type }}

|

||||

template:

|

||||

metadata:

|

||||

labels:

|

||||

app: {{ template "cassandra.name" . }}

|

||||

release: {{ .Release.Name }}

|

||||

{{- if .Values.podLabels }}

|

||||

{{ toYaml .Values.podLabels | indent 8 }}

|

||||

{{- end }}

|

||||

{{- if .Values.podAnnotations }}

|

||||

annotations:

|

||||

{{ toYaml .Values.podAnnotations | indent 8 }}

|

||||

{{- end }}

|

||||

spec:

|

||||

{{- if .Values.schedulerName }}

|

||||

schedulerName: "{{ .Values.schedulerName }}"

|

||||

{{- end }}

|

||||

hostNetwork: {{ .Values.hostNetwork }}

|

||||

{{- if .Values.selector }}

|

||||

{{ toYaml .Values.selector | indent 6 }}

|

||||

{{- end }}

|

||||

{{- if .Values.securityContext.enabled }}

|

||||

securityContext:

|

||||

fsGroup: {{ .Values.securityContext.fsGroup }}

|

||||

runAsUser: {{ .Values.securityContext.runAsUser }}

|

||||

{{- end }}

|

||||

{{- if .Values.affinity }}

|

||||

affinity:

|

||||

{{ toYaml .Values.affinity | indent 8 }}

|

||||

{{- end }}

|

||||

{{- if .Values.tolerations }}

|

||||

tolerations:

|

||||

{{ toYaml .Values.tolerations | indent 8 }}

|

||||

{{- end }}

|

||||

{{- if .Values.configOverrides }}

|

||||

initContainers:

|

||||

- name: config-copier

|

||||

image: busybox

|

||||

command: [ 'sh', '-c', 'cp /configmap-files/* /cassandra-configs/ && chown 999:999 /cassandra-configs/*']

|

||||

volumeMounts:

|

||||

{{- range $key, $value := .Values.configOverrides }}

|

||||

- name: cassandra-config-{{ $key | replace "." "-" | replace "_" "--" }}

|

||||

mountPath: /configmap-files/{{ $key }}

|

||||

subPath: {{ $key }}

|

||||

{{- end }}

|

||||

- name: cassandra-configs

|

||||

mountPath: /cassandra-configs/

|

||||

{{- end }}

|

||||

containers:

|

||||

{{- if .Values.extraContainers }}

|

||||

{{ tpl (toYaml .Values.extraContainers) . | indent 6}}

|

||||

{{- end }}

|

||||

{{- if .Values.exporter.enabled }}

|

||||

- name: cassandra-exporter

|

||||

image: "{{ .Values.exporter.image.repo }}:{{ .Values.exporter.image.tag }}"

|

||||

resources:

|

||||

{{ toYaml .Values.exporter.resources | indent 10 }}

|

||||

env:

|

||||